AI-driven remediation

To make our tool more accessible to IoT security newcomers, we integrated an AI assistant. It helps you gain more insight into both zero-day and known vulnerabilities.

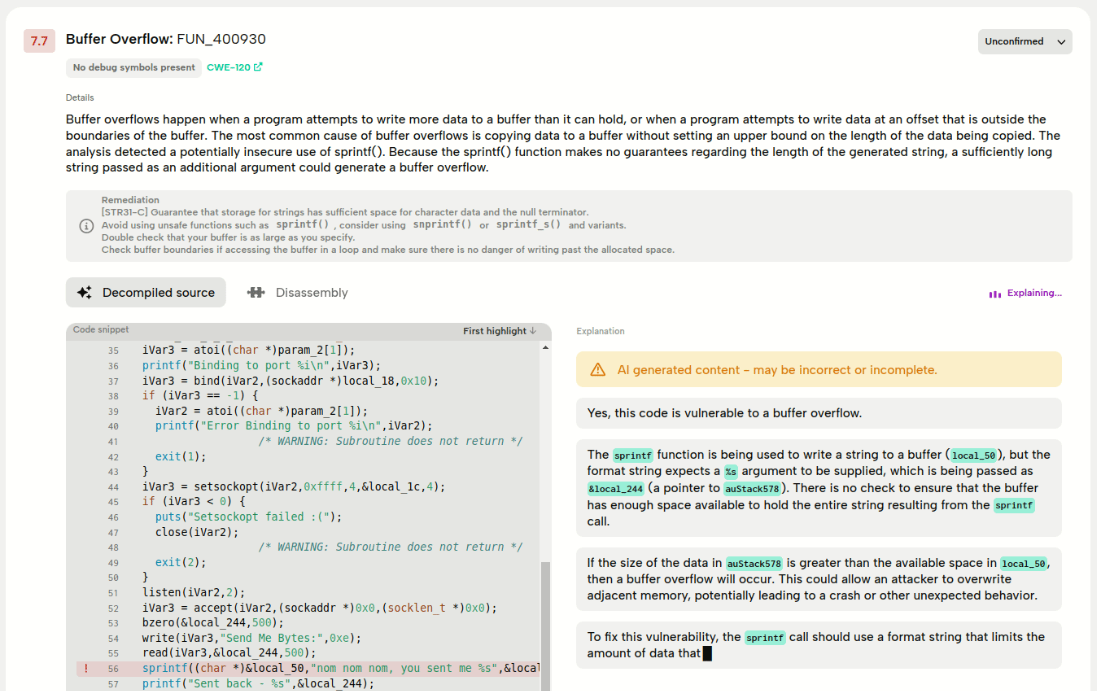

The AI assistant tries to explain why the code is vulnerable and suggests ways to fix it. The answer is streamed and usually contains the decompiled code with a possible fix for the issue, which should be enough to point you in the right direction. In our experience it usually gives insightful and actionable advice, but there is no guarantee that the answer is correct, and false positives can occasionally show up in the results.

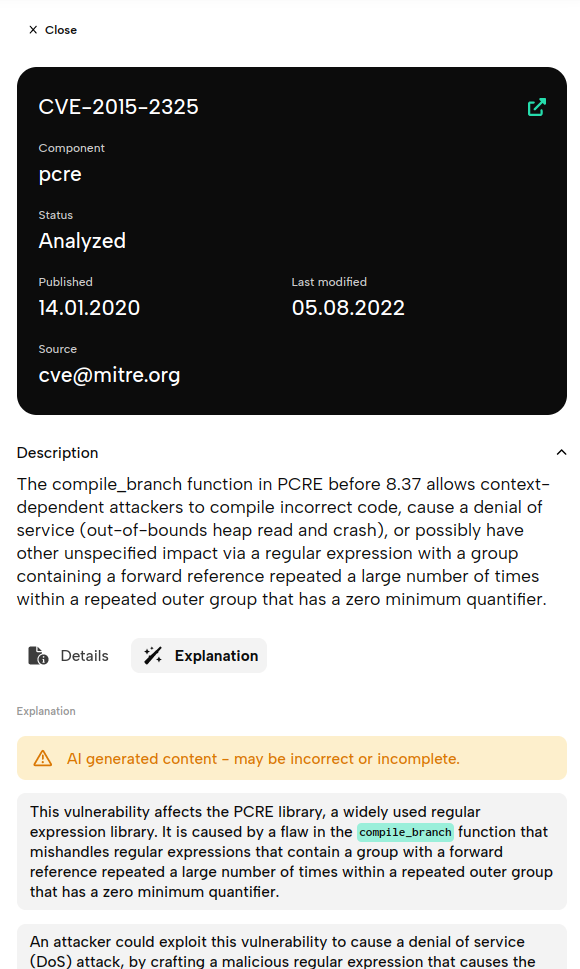

Known Vulnerabilities

For known vulnerabilities (findings with the default status Found), the answer is streamed when you click Explanation. If you or another BugProve user has already requested an explanation, a cached version of it appears right away.

Zero-day Vulnerabilities

For potential zero-days (findings with the status Unconfirmed), we need your consent before sending the code fragment to OpenAI servers. Depending on the circumstances, the following options are available:

- Explanation: instantly streams an explanation

- Show Explanation: shows a cached explanation that was already requested by you or someone else in your organization

- Regenerate: streams a new explanation without asking for consent again
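One way to read the options above is as a function of the finding's status and whether a cached explanation already exists. The sketch below encodes that reading; it is an assumption, not documented behavior. In particular, the function name `available_actions` is hypothetical, and so is the rule that Show Explanation and Regenerate appear only when a cached explanation exists.

```python
from typing import List


def available_actions(status: str, has_cached_explanation: bool) -> List[str]:
    """Return the explanation actions offered for a finding (illustrative only)."""
    if status == "Found":
        # Known vulnerability: a single Explanation action, served from the
        # cache or streamed, with no consent step.
        return ["Explanation"]
    if status == "Unconfirmed":
        # Potential zero-day. ASSUMPTION: the cached-explanation split below is
        # our interpretation of the list above, not a confirmed rule.
        if has_cached_explanation:
            return ["Show Explanation", "Regenerate"]
        return ["Explanation"]
    raise ValueError(f"unexpected finding status: {status!r}")
```

For example, `available_actions("Unconfirmed", True)` returns the two actions that work with an existing explanation, while an Unconfirmed finding with no cached explanation offers only Explanation.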